Experiments with Vibe Science

wherein I accidentally pursue an amateur paleontology phd

Some of you who know me know that I’m obsessed with prehistoric animals. It restarted because my older son got obsessed with animals, both alive and extinct, when he was two years old, and in the more than half decade since then it’s become an all-consuming passion in the Krishnan household.

At some point a few months ago, my younger son, the 5yo, asked me why his favourite dinosaur, the Spinosaurus, evolved that way and then went extinct. Convergent evolution being a favourite topic in our home, he asked why the sail had to look that way, and how it related to the sail of a sailfish. He knew the normal explanations from books and YouTube, that the sail helps shed heat or makes the animal more streamlined in water, but he asked anyway, as five year olds do, with intensity and expectation of a perfect answer.

Obviously only being an amateur paleontologist in my off hours I had no good answer. But I did have Codex. So I figured, let’s do this right. I should be able to go get some information about prehistoric animals, research it, and see if there was anything interesting in there I could proffer as an explanation.

Anyway, things got out of hand.

Since people have asked before about my research workflows I’ve been wondering about writing something, and so thought this was a great case study to write up. Especially since I’m not an expert in the field and am therefore liable to have made any number of silly mistakes, which makes it much more fun!

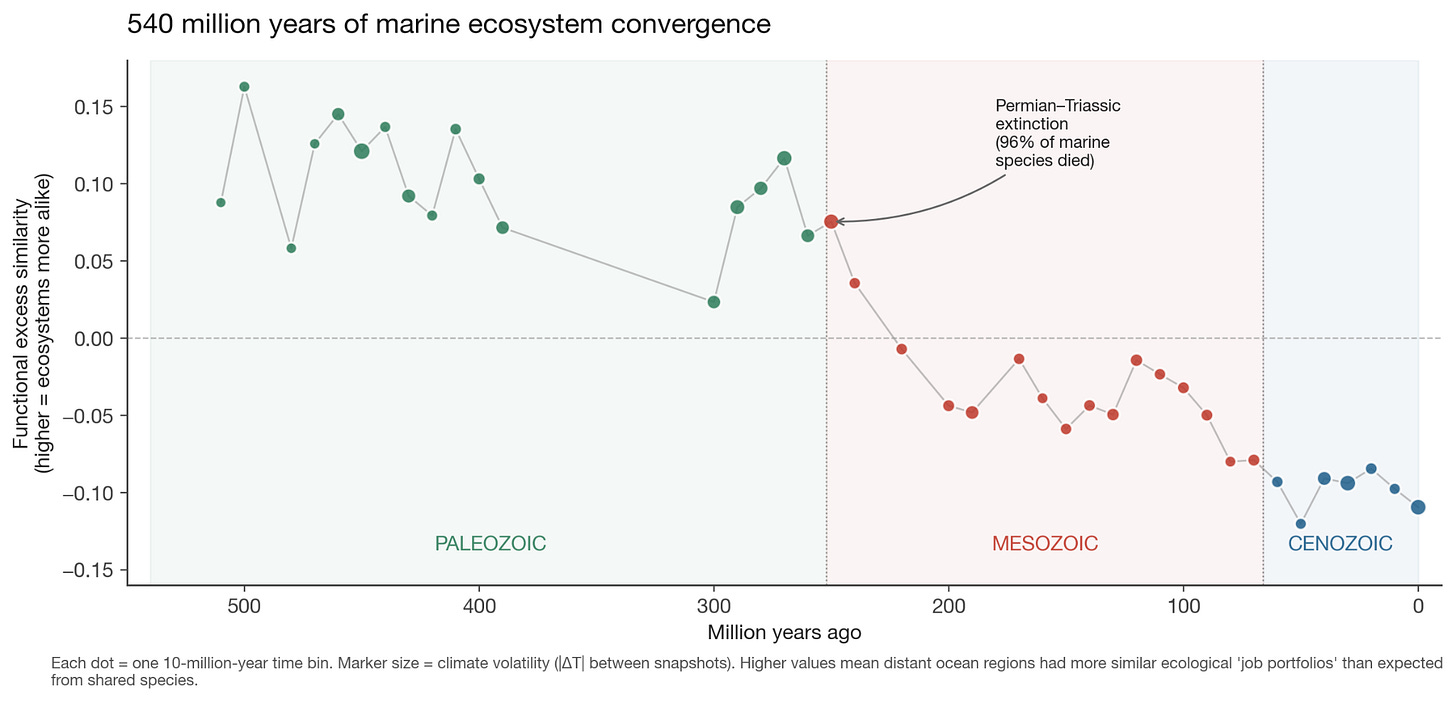

Basically, turns out there’s this database called PBDB, the Paleobiology Database, which has details about nearly 2 million fossil occurrences - what was found, where, when, and more. I downloaded it and started playing with it. It was much (much) better for marine fossils because the record is better (even invertebrates have hard shells that preserve well and get deposited in sedimentary rock, plus PBDB has better annotations for some reason) so that’s what I looked at. And for climate, I used reconstructions from a global Earth system model (CESM) that simulates what Earth’s climate looked like at 10-million-year snapshots across the entire Phanerozoic.
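To line fossils up with climate snapshots, each occurrence has to land in a time slice. Here is a minimal sketch of one way to do that, not necessarily what Codex and I ended up with. It assumes each occurrence row carries `max_ma` and `min_ma` age bounds (the column names I'd expect in a PBDB occurrence download), assigns the row to the nearest 10-million-year snapshot by the midpoint of its age range, and optionally drops rows dated too loosely to place. The function names and the `max_span` cutoff are made up for illustration.

```python
import math
from collections import defaultdict


def nearest_snapshot(max_ma, min_ma, step=10):
    """Return the snapshot age (in Ma) closest to the midpoint of an age range."""
    midpoint = (max_ma + min_ma) / 2
    # floor(x + 0.5) rounds halves up, avoiding Python's round-half-to-even.
    return int(math.floor(midpoint / step + 0.5)) * step


def group_by_snapshot(occurrences, step=10, max_span=None):
    """Group occurrence dicts by nearest snapshot.

    occurrences: iterable of dicts with 'max_ma' and 'min_ma' keys (assumed names).
    max_span: if set, skip occurrences whose age range is wider than this (in Myr).
    """
    groups = defaultdict(list)
    for occ in occurrences:
        max_ma, min_ma = float(occ["max_ma"]), float(occ["min_ma"])
        if max_span is not None and max_ma - min_ma > max_span:
            continue  # too loosely dated to assign to a single slice
        groups[nearest_snapshot(max_ma, min_ma, step)].append(occ)
    return dict(groups)
```

Midpoint binning is the bluntest option. Anything dated more loosely than the slice width gets smeared, which is why the cutoff is there.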

I had a firm belief that Earth is unique in having tectonic plates and that’s a major reason for our biodiversity, because it occasionally etch-a-sketches the lot and ecological niches emerge. I’ve had the same intuition for ecology as for markets, and have been thinking about this for a while, so thought this should help as a starter question before we got to specific animals.

Meditations On Barbells

I initially used the image of the barbell to describe a dual attitude of playing it safe in some areas (robust to negative Black Swans) and taking a lot of small risks in others (open to positive Black Swans), hence achieving antifragility. That is extreme risk aversion on one side and extreme risk loving on the other, rather than just the “medium” or t…

Digging into the fossil data

But now, I can test this out with data!

(Warning: this section will be wonky about paleontology, and if you care more about the vibe research process, do skip to the next section)

The hypothesis here was something like: “if the landscape is less stable, we will see ecosystems look more similar”. My logic was, there are certain things that all animals/plants end up needing to do, the core evolutionary niches, and under pressure those will recur everywhere, as opposed to the flourishing of complexity that can happen when there is less pressure.

Visualise it this way. Imagine two ocean regions. There are no species in common between the two. Under stable climatic conditions, the ecological “job portfolios” can be wildly different! Like filter feeders vs mobile predators etc. But under volatile climatic conditions, while these regions still share zero species, the species that exist will have more similar “job portfolios”1.

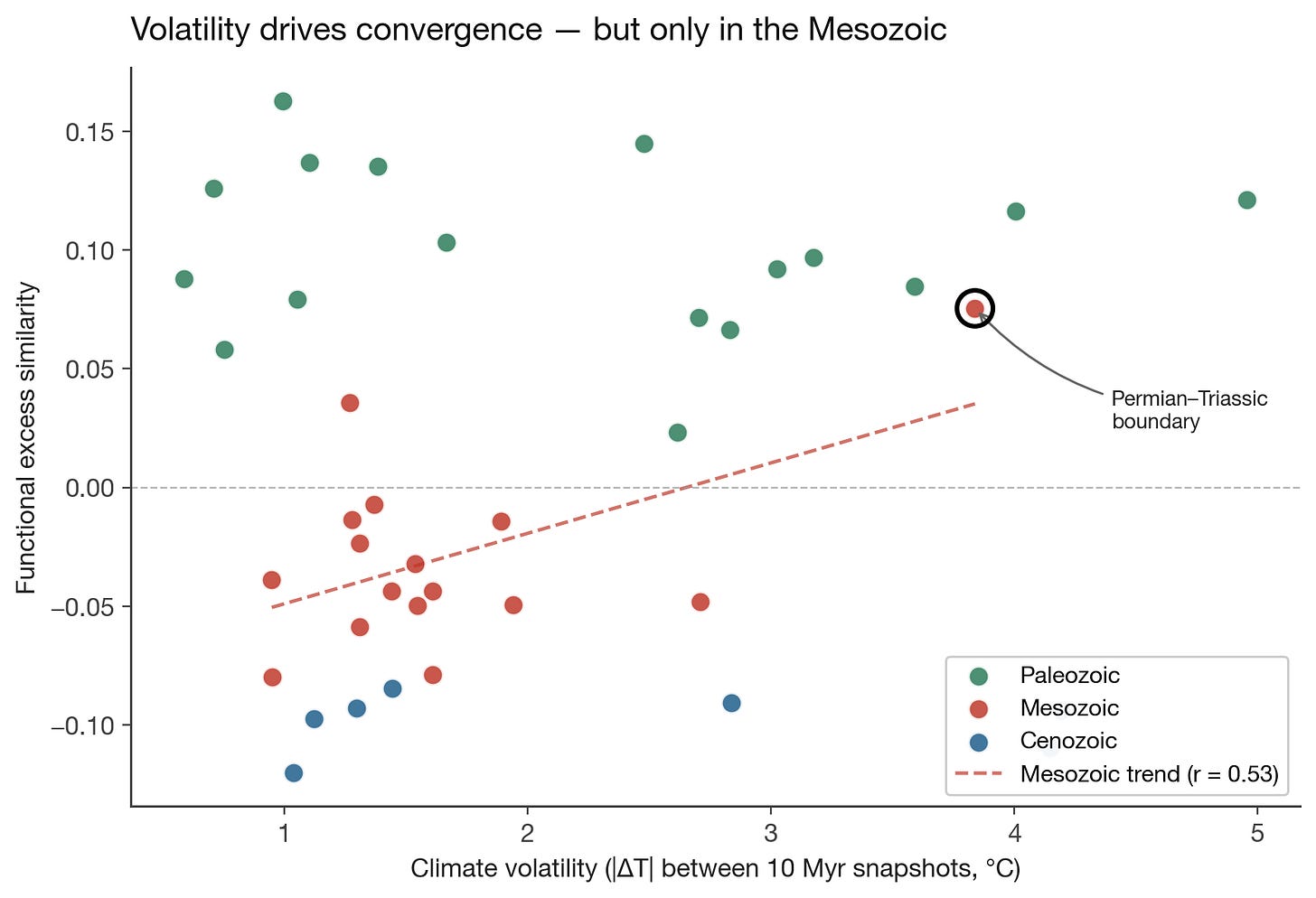

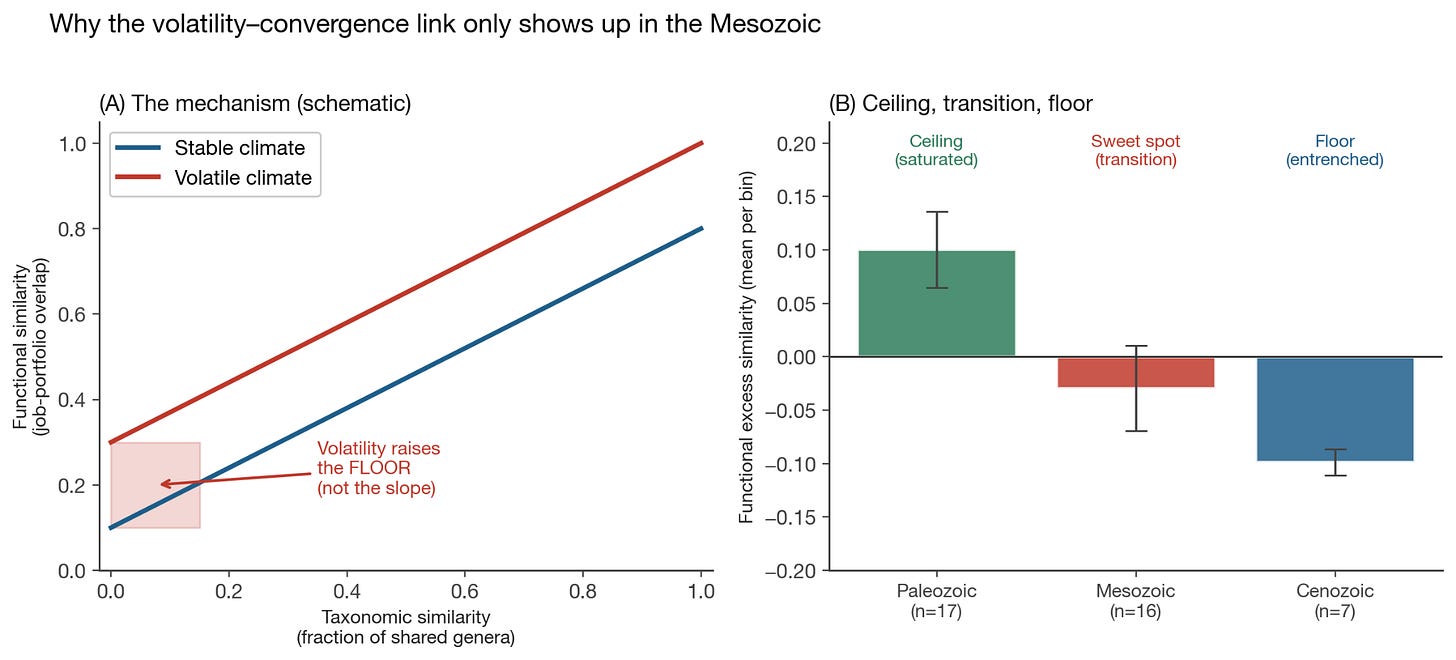

So I ran the test. Turns out, this is true, but for a more nuanced reason than I thought. Volatility doesn’t make regions that already share species more functionally similar (i.e., the “slope” stays the same). But it does raise the minimum similarity between regions that share nothing taxonomically, it sets a floor on how different two ecosystems are allowed to be regardless of their evolutionary history.
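To make the slope-versus-floor distinction concrete, here is a toy sketch of the measurement for a single time slice. It is an illustration under assumptions, not the exact pipeline: I use Jaccard overlap of genera for taxonomic similarity, cosine similarity of role counts for functional similarity, and a plain least-squares line through all region pairs. The intercept of that line is the "floor": the functional similarity expected between regions that share no genera. All function names and the input layout are hypothetical.

```python
from itertools import combinations


def jaccard(a, b):
    """Taxonomic overlap: shared genera divided by all genera in either region."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cosine(p, q):
    """Functional overlap: cosine similarity between two {role: count} dicts."""
    dot = sum(p.get(role, 0) * q.get(role, 0) for role in set(p) | set(q))
    norm_p = sum(v * v for v in p.values()) ** 0.5
    norm_q = sum(v * v for v in q.values()) ** 0.5
    return dot / (norm_p * norm_q) if norm_p and norm_q else 0.0


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def slice_floor_and_slope(regions):
    """regions: {name: {"genera": set, "roles": {role: count}}} for one slice.

    Returns (intercept, slope) of functional vs taxonomic similarity
    across every pair of regions.
    """
    xs, ys = [], []
    for a, b in combinations(sorted(regions), 2):
        xs.append(jaccard(regions[a]["genera"], regions[b]["genera"]))
        ys.append(cosine(regions[a]["roles"], regions[b]["roles"]))
    return fit_line(xs, ys)
```

Run this per slice and you get one intercept and one slope per 10 million years. The claim above is that the intercepts rise with climate volatility while the slopes do not.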

And this is very cool, because this is a non-obvious result. (At least to me, and on reflection also didn’t show up in the papers I looked at, so who knows. I’m free, Nobel committee). This is non-obvious because the naive expectation is that shared species drive functional similarity, this is how my 5yo thought that Spinosaurus sails made them similar to sailfish sails. Functional similarity, you see.

So under pressure, the environment dictates what jobs species do. But this isn’t a uniform signal. You don’t see it everywhere all the time. Nothing in biology works that way.

But at least we know the result! When climates are volatile, ecosystems converge. And we can see it across 540 million years of prehistory.

When you split this by era though, things start getting more complicated. The correlation came almost entirely from the Mesozoic. This is the age of the dinosaur, from 250 to 66 million years ago. Which is especially puzzling, because it has lower average climate volatility than the Paleozoic preceding it.

So if the story were simply “more volatility = more convergence,” the Paleozoic should show the strongest signal. It doesn’t. Which also means that the relationship between climate volatility and ecological convergence isn’t a universal law that operates the same way at all times. It needed something else to be true about the Mesozoic for the mechanism to work.

So I dug in more again to see what it could be. And lo and behold, the Mesozoic signal is almost entirely driven by a single data point: 250 Ma, the Permian-Triassic boundary. This was of course the worst mass extinction in Earth’s history, about 96% of marine species died.
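A quick way to check whether one slice is carrying a whole correlation is to drop each point in turn and recompute. Below is a minimal leave-one-out sketch using a hand-rolled Pearson correlation. The function names are mine for illustration, and it is not a record of the exact analysis.

```python
def pearson(xs, ys):
    """Pearson correlation coefficient; returns NaN if either series is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / (sxx * syy) ** 0.5


def leave_one_out(labels, xs, ys):
    """Recompute the correlation with each point removed.

    Returns (full_r, rows) where rows are (label, r_without, change)
    sorted so the most influential point comes first.
    """
    full_r = pearson(xs, ys)
    rows = []
    for i, label in enumerate(labels):
        r = pearson(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        rows.append((label, r, r - full_r))
    rows.sort(key=lambda row: abs(row[2]), reverse=True)
    return full_r, rows
```

Pass in the slice ages as `labels`, volatility as `xs` and the convergence measure as `ys`. If removing one age collapses or flips the correlation, that slice is doing the work.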

Even more interestingly, the pattern seems to hold across the eras and convergence seems to drop monotonically through time. Meaning:

Paleozoic seas were simple enough that the regions always converged on similar roles regardless of climate, which is fair enough. It gives us a ceiling. Life was early!

Cenozoic sees uniformly low convergence. Meaning it’s a floor: the modern marine ecosystem is so complex and entrenched that volatility can’t push regions towards similarity, and the incumbents hold.

Which means the Mesozoic was in the sweet spot of transition and it had the extinction event in the middle, meaning there’s enough range in convergence for volatility to have something to correlate with.

This is nice, and as an added bonus it’s similar to my thesis in economic markets. You need to have market dislocation for new things to emerge, but the markets can’t be too choppy or too early or they won’t even show up. Liquid markets don’t converge under stress the same way emerging markets do, for instance.

Predictions

So far, so good. Now, does it actually predict anything or is it purely descriptive? As Friedman said, “The only relevant test of the validity of a hypothesis is comparison of its predictions with experience.”

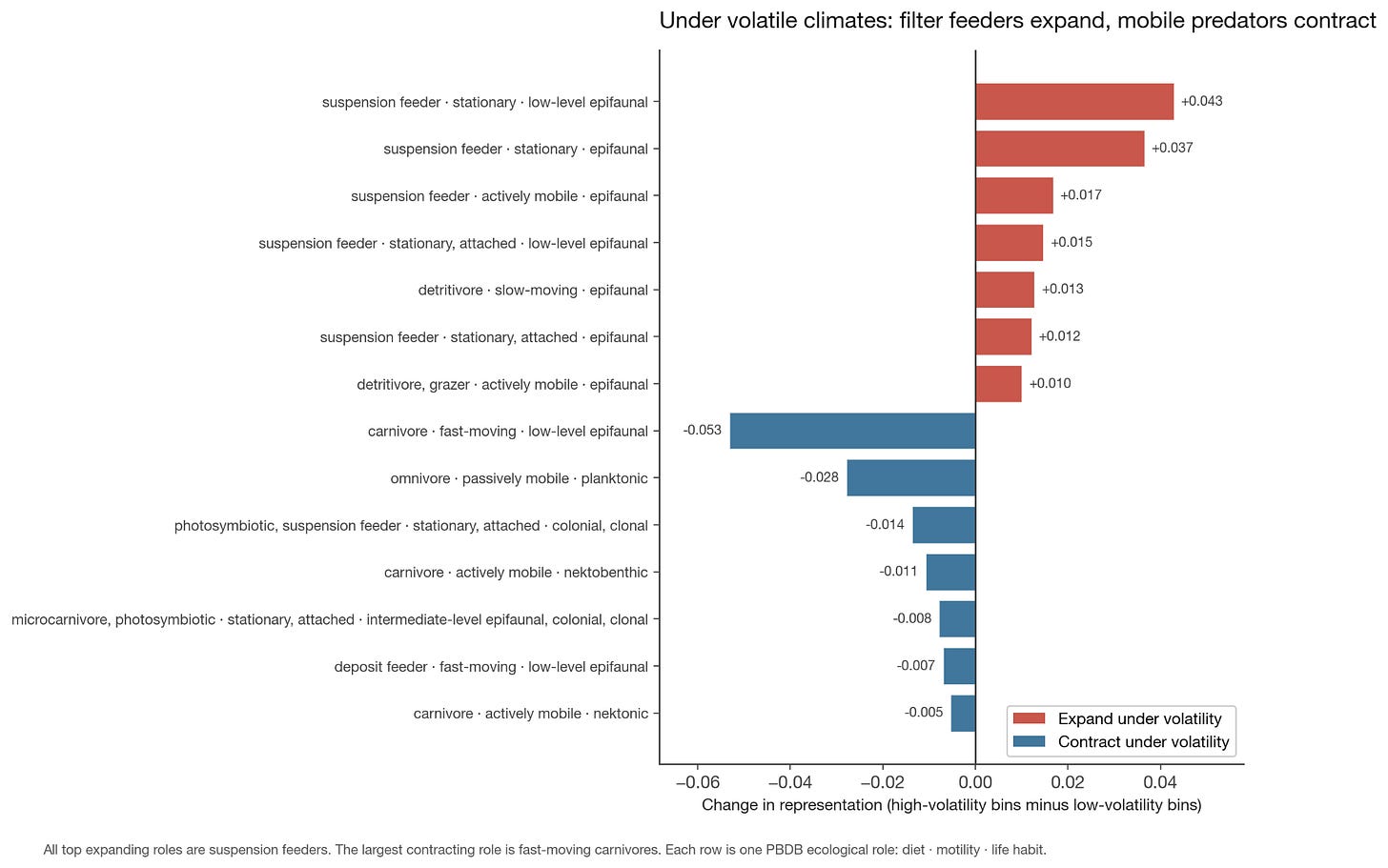

Rather happily, my theory seems to predict at least a couple things. For example, if I was right then “sit and filter” type roles should expand during volatility and large chasing predators should contract. Or more specifically, there are certain animals like filter feeders and so on which are low-energy, and I thought these would get a boost during times of climatic volatility, and vice versa for high-energy predators.

So when I ran the analysis for the top expanding “roles” under volatile climates, they’re ALL suspension feeders.

Mobile predators shrank and stationary filter-feeders expanded. The convergence remains more fundamental: in volatile climates the entire job portfolio homogenizes across regions, regardless of which specific jobs expand or contract.
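The "which roles expand" check can be sketched as a comparison of role shares between volatile and stable slices. This is a simplified illustration: the volatility threshold, the input layout, and the function names are all assumptions for the example, and a real version would want to control for sampling effort.

```python
def role_shares(counts):
    """Convert {role: count} into {role: share of all occurrences}."""
    total = sum(counts.values())
    return {role: n / total for role, n in counts.items()} if total else {}


def share_shift(slices, threshold):
    """Rank roles by how much their mean share differs between regimes.

    slices: list of (volatility, {role: count}) pairs, one per time slice.
    threshold: volatility at or above this counts as "volatile" (assumed cutoff).
    Returns [(role, mean_volatile_share - mean_stable_share)], largest first.
    """
    volatile = [role_shares(c) for v, c in slices if v >= threshold]
    stable = [role_shares(c) for v, c in slices if v < threshold]
    if not volatile or not stable:
        raise ValueError("need at least one slice on each side of the threshold")

    def mean_share(group, role):
        return sum(shares.get(role, 0.0) for shares in group) / len(group)

    roles = {role for shares in volatile + stable for role in shares}
    shift = {r: mean_share(volatile, r) - mean_share(stable, r) for r in roles}
    return sorted(shift.items(), key=lambda kv: kv[1], reverse=True)
```

Roles at the top of the list expand under volatility and roles at the bottom contract. The prediction was that suspension feeders would sit at the top and mobile predators at the bottom.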

Also happily, not all my theses worked out2. I had a theory that knowing the role should tell you less about the clade, i.e., during volatile climes, ecological roles would become more “interchangeable” across clades - any clade could fill any role. But alas, not true. I also tested this hypothesis on land animal data but mostly got no signal; the data was too thin. (The biggest caveat is that PBDB’s ecological annotations are uneven across clades, so the signal disappears and reappears based on what’s chosen. This could be true signal, but could also be about annotation quality. As always, data quality is one’s final boss in all analysis!)

And regarding my original supposition of tectonic plates causing convergence, that didn’t quite hold up either. When I tested the convergence signal against different variables, it tracked temperature change, not coastline change or land-area rearrangement. The plates matter because they cause climate volatility, not because of the geography per se. But that’s fine, close enough.

In any case, current warming rates are in the top 10% of anything the Phanerozoic has seen. If this theory is right, marine ecosystems today should be losing their regional distinctiveness and converging on a narrower job menu. That prediction is testable.

Sadly though I still don’t have a perfect answer for why Spinosaurus evolved its sail. But I could now tell him that the Cretaceous oceans it fished in were converging on a limited menu of ecological jobs - and being a 15-meter semi-aquatic predator was one of them.

What’s vibe research like

Now, back to the matter at hand: how do you do the research in the first place? The primary method in doing all this was to get and clean the data source, which required plenty of manual looking-at-the-data and telling Codex this isn’t good enough. There was no substitute for actually looking myself, and LLMs’ ability to judge their own work remains remarkably bad.

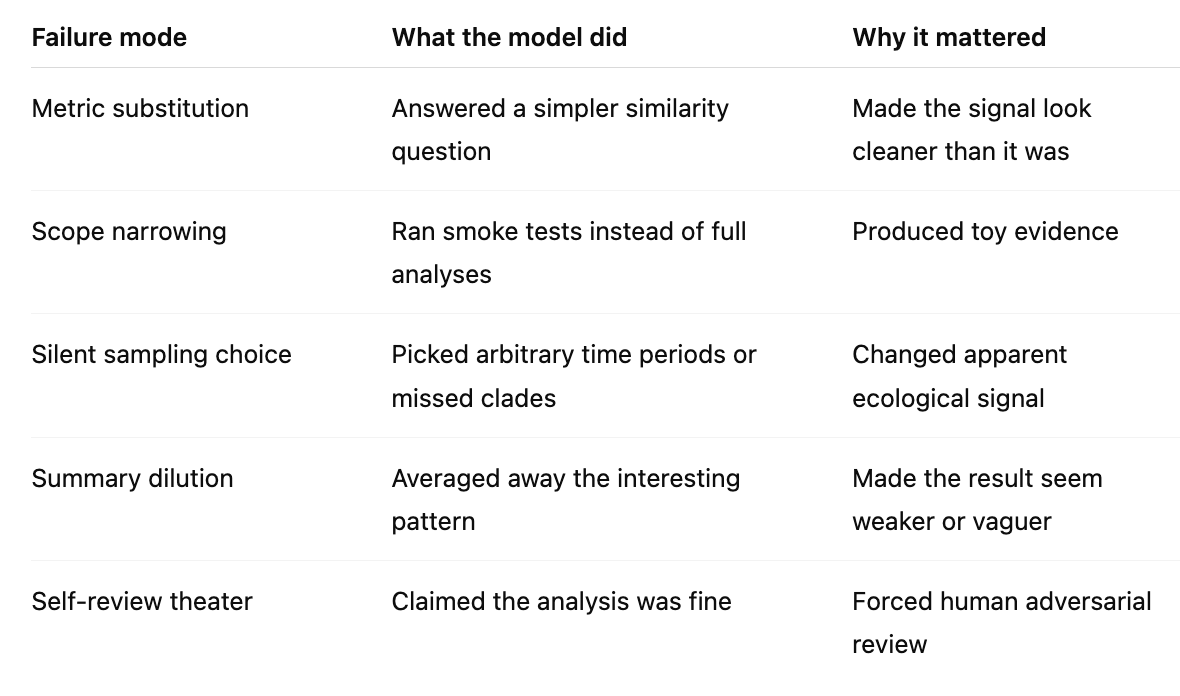

However, once you define a task well, they will go off and do it, almost no matter how hard it seems. But subtle errors can creep in here. Did it actually do the analysis you asked, or a simpler version of it that it thought might be good enough? Quite often the models were lazy and answered what they thought was a simpler question.

Defining the tasks to be done is no easy matter by the way. Things always sound just so similar, but only when it’s done will it turn out to be different. There’s quite a bit of parsing a given plan to see if it makes sense, and even then sometimes it only makes sense after the plan’s executed to go back and say yeah, you did that wrong!

Here for instance the models missed some clades for some of the analyses, for unclear reasons, or chose random time periods often, again for no reason, or summarised the results diluting the signal in many (many) cases. Constant vigilance is essential!

They also constantly do things that you didn’t quite want but that are a “watered down” version of what you asked for. The models just absolutely love mediocrity, cc Venkatesh Rao. They can’t wait to sand the edges off any crazy ideas you have, to make this just so much better caveated, to make sure you’re not over your skis and have someone call you out on something. They are eager to try a minimal version, to test something non-offensive, something unobtrusive, to get to a minimal working version, to create something that’s narrowly interesting. Zero boldness.

Agents also absolutely love doing smoke tests! Man, you ask it to do anything, it’ll do the simplest version of it to save tokens or some other reason and generally shy away from just spending the token budget and getting you the answer. This was really really irritating! I know people say automated researchers are coming but my god I don’t trust them right now!

If this was an area I knew so well that I could just define the endpoint and let it rip, things would be different. “Make sure you sweep the hyperparameters, the loss should be < X”, make it so! But how do I do that for a truly exploratory problem? The entire point is to do repeated experiments and to test what worked and what didn’t and to update the next step! I don’t know what’s next!

You also end up having to clean up the workspace regularly. Because the models also hate deleting anything (though, yes, occasionally they are happy to delete everything), and this shows up as an enormous surplus of temp folders, markdown files, one-off scripts, visualisation jpegs, and assorted jsons. Getting them to clean up is roughly as hard as with my kids!

So you end up poking it regularly (every one to three prompts or so) about whether it did the thing you asked for, what the results were, whether it can show them in a few different ways and write up a brief about it, whether that answered the original question, and if not what else needs to be done. And you do this in a cycle.

I presume someone’s built an automated harness to do this, but I found there simply was no substitute for doing this myself, since see above I don’t trust the models yet. This entire field is new to me and this felt like starting off with getting a PhD. And even with that relying on the models to self-police or do research was remarkably hard!

Because you also do have to correct its presumptions a lot! It will constantly say some analyses can’t be done, or that some are a bad idea, and you have to stay on top to push it. Just pressing “yes” doesn’t help, especially in domains that aren’t like coding or running AI tests where they presumably have seen a lot more results.

Having multiple models helped a lot. Opus to review GPT and vice versa, to keep each other in check. Quite often it was a way to get a first couple reviews done before I could jump in and change course entirely, which happened at least ten times in the time I wrote this essay.

But I have to say, doing all this mainly from CLI and a chat window was so fun! Christ, this is the best way to learn anything. The hardest part was reading the constant walls of text I got back. My Fermi estimate is that I read a Proust’s worth of tokens back in this work. Well, skimmed. Received, certainly. It was a lot.

The result though, isn’t it remarkable? Any theory you have now is testable if the data is available. You really do have an analyst right with you to do whatever you want.

It’s brilliant, it’s indefatigable, it’s a little dumb, it’s annoying, it believes weird things, but it’ll do whatever you ask it to. And in the process of teaching it something, you get to learn quite a bit of something!

If any actual paleontologists are reading this, 1) please tell me if I got something right here, and 2) I would dearly love to receive my doctorate now, please and thank you.

As much as we all love vibe coding and are suspicious of vibe-physics I can highly recommend vibe-analytics. Among the roads not taken is doing a PhD in marine paleontology but happily we can still do the equivalent now outside the tower.

And in the meantime, you can go play with some data I made for the kids here, in a Paleontology Analytics website. The kids seem to think it’s fine, I’m inordinately happy with it.

Repo: Paleontology Analytics

1. For each 10-million-year slice, I compared pairs of marine regions. The x-axis was taxonomic overlap: how many genera they shared. The y-axis was functional overlap: whether their animals occupied similar ecological roles. The interesting quantity was excess functional similarity: are two regions doing the same ecological jobs even when they do not share the same genera?

2. Means we’re not in a Truman show for my benefit, and that I’m not being entirely glazed by Codex.