Artificial Life, Artificial Intelligence

I. The old dream

Ever since humans became humans we’ve wanted to play god. To create life. We had stories of golems, shaped from clay with a word put in their hollow skulls: “emet”, meaning truth, and, if you wanted to turn one off, “met”, meaning death. The stories run from Solomon ibn Gabirol in the 11th century, who was said to have created a female golem to do household chores (relatable), to the Vilna Gaon, who tried to make a golem as a child. Hero of Alexandria made intricate mechanical and hydraulic devices, self-moving figures and artificial birds.

The 20th century was no exception, except the golems were getting a bit more real. At this point you might not be surprised to find that John von Neumann, who seems to have had a hand in discovering almost everything else, thought computers could simulate and create life! He had an idea for a “universal constructor”, a machine which could build other machines. Together with Stanislaw Ulam, he also developed the idea of cellular automata.

The first Artificial Life (ALife) conference happened in 1987. It focused on softer versions of the dream: simulating life on these newly created digital substrates. An early example was Conway’s Game of Life, which has simple rules that, applied repeatedly, produce complex phenomena.
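To make "simple rules, applied repeatedly" concrete, here is a minimal Python sketch of the Game of Life. The rules are Conway's standard ones (a dead cell with exactly three live neighbours is born, a live cell with two or three survives). The names `step`, `run`, `glider` and `blinker` are mine, chosen for illustration, and the unbounded set-of-cells representation is just one convenient choice.

```python
from collections import Counter


def step(live):
    """Advance one generation; `live` is a set of (row, col) live cells."""
    # For every cell next to a live cell, count its live neighbours.
    neighbour_counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth on exactly 3 neighbours; survival on 2 or 3.
    return {
        cell
        for cell, n in neighbour_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


def run(live, generations):
    """Apply `step` repeatedly and return the final set of live cells."""
    for _ in range(generations):
        live = step(live)
    return live


# Two classic starting patterns (illustrative coordinates).
glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}
blinker = {(1, 0), (1, 1), (1, 2)}
```

Nothing in `step` mentions movement, yet `run(glider, 4)` returns the same shape shifted one cell diagonally, and the blinker flips between a row and a column forever. The behaviour lives in the repetition, not in the rule.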

There have been plenty of explorations of this which relied on crafting simple rules and noticing the complexity that emerged when you combined a starting condition with those rules again and again and again. Even the similarly simple algorithms that used some form of mutation and selection, inspired by biological evolution, would effectively do this. The researchers behind them thought that the basis of life was a firm set of rules and that the complexity that needed to emerge was a matter of the correct set of iterations.

We’re surrounded by complex phenomena like this. Weather is governed by the Navier-Stokes equations for fluid dynamics, a deterministic system that becomes chaotic due to nonlinearity. This is the famous butterfly effect, which Edward Lorenz discovered when he rounded off one variable from 0.506127 to 0.506 in his weather simulations and the outcome changed dramatically.
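A hedged sketch of that sensitivity follows. Lorenz's original run used a larger weather model that I am not reproducing here. As a stand-in, this uses his later three-variable system with the textbook parameters (sigma = 10, rho = 28, beta = 8/3), integrated with a hand-rolled fourth-order Runge-Kutta step. The other two starting coordinates, the step size and the function names are assumptions for illustration; only the 0.506127 versus 0.506 pair comes from the story.

```python
import math


def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the three-variable Lorenz system."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """One classical fourth-order Runge-Kutta step of size dt."""
    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))

    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separations(start_a, start_b, steps=4000, dt=0.01):
    """Distance between two trajectories at every step, including step 0."""
    a, b = start_a, start_b
    gaps = [math.dist(a, b)]
    for _ in range(steps):
        a, b = rk4_step(a, dt), rk4_step(b, dt)
        gaps.append(math.dist(a, b))
    return gaps


# Same start except for the rounded first coordinate (y and z are assumed).
gaps = separations((0.506127, 1.0, 1.0), (0.506, 1.0, 1.0))
print(f"initial gap: {gaps[0]:.6f}, largest gap: {max(gaps):.2f}")
```

The two runs begin about a ten-thousandth apart and end up as far apart as two unrelated points on the attractor. The equations never change; only the fourth decimal place does.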

Stephen Wolfram built “A New Kind of Science” with this idea as its background. He saw it as a great way to think about how computational complexity emerges from simple starting points. You can get to quite staggering complexity starting from simple rules that get applied repeatedly, but looking at the final form it’s not easy to figure out what the initial rules were.

It’s probably fair to say this hasn’t quite worked yet. We learnt about self-organisation, emergence and some of the principles that underlie evolution. But the dream of creating life remains very much a dream.

II. Evolution without biology

Evolutionary algorithms were the other half of our attempts. If cellular automata said maybe simple rules applied repeatedly are enough to make complexity, evolutionary strategies said maybe you don’t even need to know the design, just make variants, select the ones that work, mutate them, and let the search do the humiliating work you couldn’t do yourself.

This really worked too! Evolutionary algorithms can discover strange hacks, controllers that make simulated bodies walk, antennae and circuits and neural network weights that no engineer would have written on purpose. CMA-ES is one of the mature forms of this: an evolution strategy for hard black-box optimisation, especially when gradients are not your friend.
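The loop is simple enough to sketch. What follows is not CMA-ES, which also adapts its mutation distribution as it goes, but a bare-bones "make variants, keep the ones that work" strategy on a toy objective. The `sphere` function, the population sizes and the fixed mutation width `sigma` are all assumptions picked for illustration.

```python
import random


def sphere(x):
    """Toy black-box objective: lower is better, minimum at the origin."""
    return sum(v * v for v in x)


def evolve(fitness, dim=5, mu=10, lam=40, sigma=0.3, generations=200, seed=0):
    """Keep the best `mu` of parents plus `lam` mutated children each round."""
    rng = random.Random(seed)
    population = [[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(mu)]
    best_history = [min(fitness(p) for p in population)]
    for _ in range(generations):
        # Variation: each child is a random parent plus Gaussian noise.
        children = [
            [gene + rng.gauss(0, sigma) for gene in rng.choice(population)]
            for _ in range(lam)
        ]
        # Selection: parents compete with children, so the best never gets worse.
        population = sorted(population + children, key=fitness)[:mu]
        best_history.append(fitness(population[0]))
    return population[0], best_history


best, history = evolve(sphere)
print(f"best fitness went from {history[0]:.3f} to {history[-1]:.3f}")
```

The search never looks at a gradient or at the formula inside `sphere`; it only asks which variants scored better. That is the whole appeal, and also why the richness of the objective ends up mattering so much.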

Avida went further, to digital organisms that replicated, competed for space, mutated, and evolved on a lattice. And you could see some of the things we associate with biology: parasites, robustness, weird contingencies, the sense that the system found routes through possibility-space that the programmer didn’t explicitly write.

Novelty search and POET-type work noticed this. Novelty search dropped the fixed objective and rewarded behaviours for being new, and POET realised you needed to generate environments and agents together! The problem is not that evolution needs a target. Sometimes a target is the exact problem. If you optimise too directly, you walk straight into local cleverness and get stuck there. And in reality, environments are not static: you coevolve with your surroundings.

Folks got quite excited about the possibility that this was the way to get to life in computer science. But the catch ended up being the same one. These worlds were very thin! The genomes were short, mutations were simple, the “bodies” were simple, the ecologies too narrow, and the objective functions not nearly complex or expressive enough.

I don’t think the lesson is that mutation and selection were weak. They were too strong if anything. They kept finding clever moves inside worlds that were not rich enough to keep rewarding cleverness forever. Maybe you needed evolution to happen inside an entire world, not just a pocket universe. Maybe this was the key difference. Artificial life had evolution, but not enough world.

III. The missing machinery

Real biology is obscene in richness compared to these programs. It is embarrassing how much machinery sits between a small genetic change and the thing we later call a trait, exploding in complexity the further you go up the ladder of abstraction!

Biology is just really, really complicated and we understand barely anything. The smallest synthetic cell we have built, JCVI-syn3.0, had 473 genes, tiny by biological standards. And when it was made, 149 of those genes still had unknown biological functions! Even after we stripped a cell down to the minimum, roughly a third of it remained a mystery.

Humans are worse. We only have around 20,000 protein-coding genes, and those genes are less than 2 percent of the genome. This sounds like it should make us simple, but it does not. The rest is regulation, RNA, splicing, chromatin, timing, cell signalling, tissue mechanics, development, and the body constantly being interpreted by the environment. ENCODE found hundreds of thousands of candidate regulatory elements in the human genome. You do not get a human by reading off a list of genes like ingredients on a cereal box.

A gene is not a trait. A gene is an instruction that gets interpreted by a cell, inside a tissue, at a particular time, under local chemical gradients, with feedback coming from above and below. DNA becomes RNA, RNA becomes protein, proteins regulate other proteins, cells interpret signals, tissues constrain cells, organisms modify environments, environments select organisms, and the whole thing loops until it all sort of works in retrospect, despite the fact that maybe half the time it does not do the thing that we think it ought to do as a rule.

This is why saying “mutation plus selection” is true, but thin. ALife borrowed the mutation and selection part. But we didn’t have anything as baroque as the substrate, where a tiny change could become a coherent body-level change because the system already contains a huge amount of inherited structure for interpreting that change.

Or rather, we didn’t have a sufficiently robust environment for the model to learn and evolve toward and within. This is the opening modern AI creates. A foundation model is not alive, but it is a learned prior over the traces of the real world. It has seen language, code, images, human plans, mistakes, objects, conventions, bits of physics, bits of biology, and all the ugly statistical residue of reality. In an evolutionary system, that could act less like the organism and more like the developmental machinery: the thing that turns small mutations into large, coherent phenotypic changes.

IV. AI

Now, turns out there was another way we could conceptualise creating phenomena with the appearance of life. The polar opposite of what we did with cellular automata. Rather than starting with rules and generating complexity, this starts with enormous amounts of complex data and tries to discover the underlying patterns.

All data encodes regularities and statistical patterns that reflect underlying structural laws that exist implicitly. And the trained network “absorbs” patterns from examples and eventually settles into a configuration of parameters that can generate behaviors consistent with those patterns.

It works phenomenally well! Many even think we have glimmers of consciousness already.

However, there is a problem with this. Unlike with the first method, we don’t know exactly what the network learnt. It might be the actual underlying rules which give rise to the complexity we see around us. Or it might be statistical patterns it has gleaned, epicycles that don’t scale.

The success with language is what gives us pause now. Human language, which we thought a confusing mess, seems to have enormous redundancy and structure too. The models’ success is contingent on the kind of complexity found in real-world data being rich in patterns, not arbitrary and entirely random.

This also means that something which learnt to use language also learnt the types of language that are mostly used, i.e., language not in a platonic sense but as it is actually used to communicate whatever is asked.

V. Uncertainty

If you think of a deep learning neural net as a store of patterns emergent from training, not just from the data but from the derivation of the data, some even invisible to us, what does that tell you? There is a combinatorially explosive number of patterns it can learn.

This was Hector Levesque’s old worry: statistical learning can look like understanding long before we know whether it has actually learned the causal structure underneath.

What Douglas Adams wrote about tautologies comes to mind here:

“a tautology is something that if it means nothing, not only that no information has gone into it but that no consequence has come out of it”

The way we train these models is also a strange kind of tautology. Training looks circular, but the circle is not empty because the data contains structure, and the model architecture, objective, and representational constraints decide which structures can come out. The question is not only whether the model has compressed the world. The question is which compression it found.

Artificial life had evolution, but not enough world. Modern AI has world, at least enough of it, but no directed evolution. Maybe the next attempt at creating life comes from putting those two failures together. As with many essays the Hegelian synthesis points a way forward.

So if we can make a model act as a learned physics engine, a dense, lossy encoding of language, code, images, culture, and bits of the real world, maybe evolution can then operate inside that substrate: making small variants, testing them, killing the expensive ones, preserving the useful ones, letting specialists emerge, letting them merge, and so on?

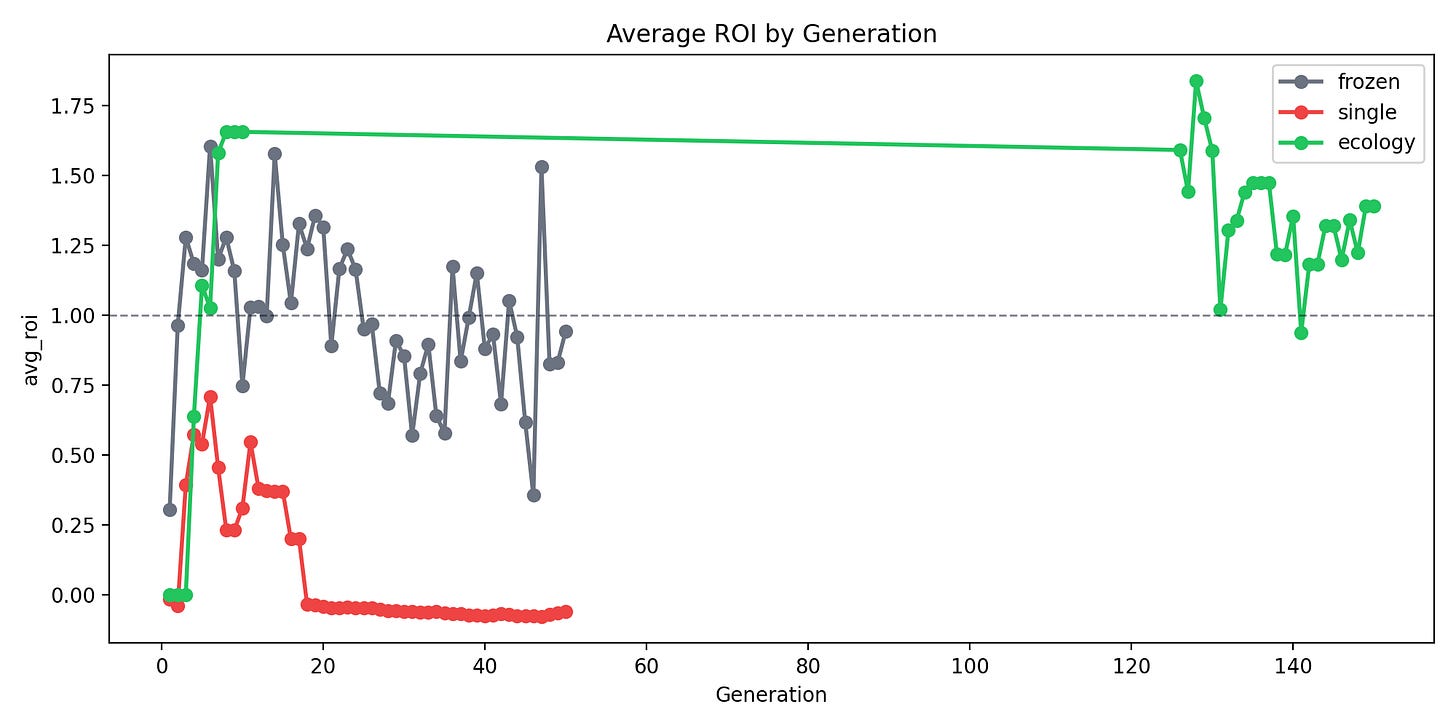

That was my conjecture. So I tried to test it with Evolora. Freeze a whole model as the world and let small LoRA adapters live inside it as organisms or organelles. Charge them energy for tokens, pay them for useful behavior, let bankruptcy mean death, profit mean reproduction, and successful adapters merge into offspring. I built it as a semantic Game of Life.

It is still at the fun-toy stage, and enormously fun. The tasks are constrained, and so is the environment. Open-endedness is yet to be proven at a large scale. But there are already little signs of life in the quasi-life sense: niches, mergers, energy pressure, specialists, routing, small colonies, places where an evolutionary portfolio seems more robust out of distribution than a single trained adapter.
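To show the shape of those energy rules, here is a toy sketch. It is not Evolora's code: there is no frozen model and no LoRA adapter in it, each organism is reduced to a single hypothetical `skill` number, and every constant and name below is an assumption made up for illustration. It only demonstrates the bookkeeping: charge for tokens, pay for useful behaviour, remove the bankrupt, let the profitable reproduce by merging with a partner.

```python
import random
from dataclasses import dataclass

# All constants are illustrative assumptions, not values from Evolora.
TOKEN_COST = 1.0        # energy charged per tick (stand-in for tokens used)
PAYOUT = 2.0            # energy paid per unit of useful behaviour
BIRTH_THRESHOLD = 10.0  # energy needed before an organism may reproduce
CHILD_ENERGY = 5.0      # energy handed from parent to offspring
MAX_POPULATION = 30     # crude carrying capacity


@dataclass
class Organism:
    name: str
    skill: float   # hypothetical scalar standing in for adapter weights, in [0, 1]
    energy: float


def tick(population, rng):
    # 1. Charge for tokens, pay for useful behaviour.
    for org in population:
        org.energy += PAYOUT * org.skill - TOKEN_COST
    # 2. Bankruptcy means death.
    survivors = [org for org in population if org.energy > 0]
    # 3. Profit means reproduction; the child merges two parents.
    newborns = []
    for parent in survivors:
        if parent.energy < BIRTH_THRESHOLD:
            continue
        if len(survivors) + len(newborns) >= MAX_POPULATION:
            break
        # Richer organisms are more likely partners (may be the parent itself).
        weights = [org.energy for org in survivors]
        partner = rng.choices(survivors, weights=weights)[0]
        merged = (parent.skill + partner.skill) / 2 + rng.gauss(0, 0.05)
        parent.energy -= CHILD_ENERGY
        newborns.append(
            Organism("offspring", min(1.0, max(0.0, merged)), CHILD_ENERGY)
        )
    return survivors + newborns


def run(ticks=60, seed=0):
    rng = random.Random(seed)
    founders = (0.1, 0.3, 0.5, 0.7, 0.9)
    population = [Organism(f"founder-{s}", s, 5.0) for s in founders]
    for _ in range(ticks):
        population = tick(population, rng)
    return population


final = run()
print(len(final), "organisms alive;", sorted({org.name for org in final}))
```

With these numbers a founder whose skill earns less than its token cost goes bankrupt within a few ticks, while the high-skill founders keep paying for offspring. Swapping the `skill` number for a real adapter and the payout for a real task score is where all the actual difficulty lives.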

Is this the future of artificial life? Would we be able to combine the best aspects of learning from arbitrary data and creating complexity from repetitive rule application? If the old dream was Talos with ichor in his veins, the new one is stranger. Maybe we have to evolve an entire ecology learning to survive inside a world we trained but do not really understand, not just one artificial creature. We have come a long way from clay, ichor, and homunculi. Not far enough to make life. But maybe far enough to build a better fake to learn from.