The future of work is world models

Why we need to build Starcraft for CEOs

Here’s a thing I keep coming back to. Within a few years, the average company is going to have dramatically more AI agents running than human employees. Agents handling customer inquiries, doing sales and service, monitoring assets, running pricing experiments, flagging exceptions, managing vendors, and so on.

When that happens, running a business starts to look like a videogame. Hundreds of autonomous entities operating across a complex environment. Agents will be working inside work devices. They’ll be talking to customers, they’ll be available 24/7. They’ll spawn new agents and combine old ones. They’ll have email addresses and Slack accounts. They’ll be colleagues.

But how do you play this videogame? Hundreds of windows and tabs, one for each digital employee or each department? Autonomous can’t mean no oversight. Humans are autonomous and we get oversight. When thousands of agents are making thousands of decisions a day, you can’t manage the old way, by check-ins and check-outs and quarterly reviews. You have to find a new way and manage by exception - scanning for anomalies, reviewing what broke, simulating what to do next. As my friend James Cham said, work used to be a first-person shooter, where you’re directing every movement and every shot, which is what we do today, and it might become more like Starcraft, where you have to move people and agents around to achieve your objectives.

And to do that requires a model of the business underneath. This mostly exists today in various people’s heads but rarely is explicit. We can’t even stay on top of our emails, much less a thousand or a hundred thousand workers. A big benefit of digital labour though is that you can have a precise state of the business at every point in time.

We have solved this problem before. When we needed to figure out how to train autonomous cars, for instance, we needed an environment that’s realistic and the ability to run “what ifs” in a controllable simulation. Waymo and Tesla built these as World Models. The equivalent for business already exists in the heads of management in every company. Every CEO is constantly running “what happens if I do x” in their heads. They just can’t operationalise it because there’s no ‘environment’ that reflects their business to run it on! World models already exist anywhere the environment is expensive, instrumented, and operationally constrained - factories, grids, airspace, battlefields, fabs, networks, wells, and warehouses.

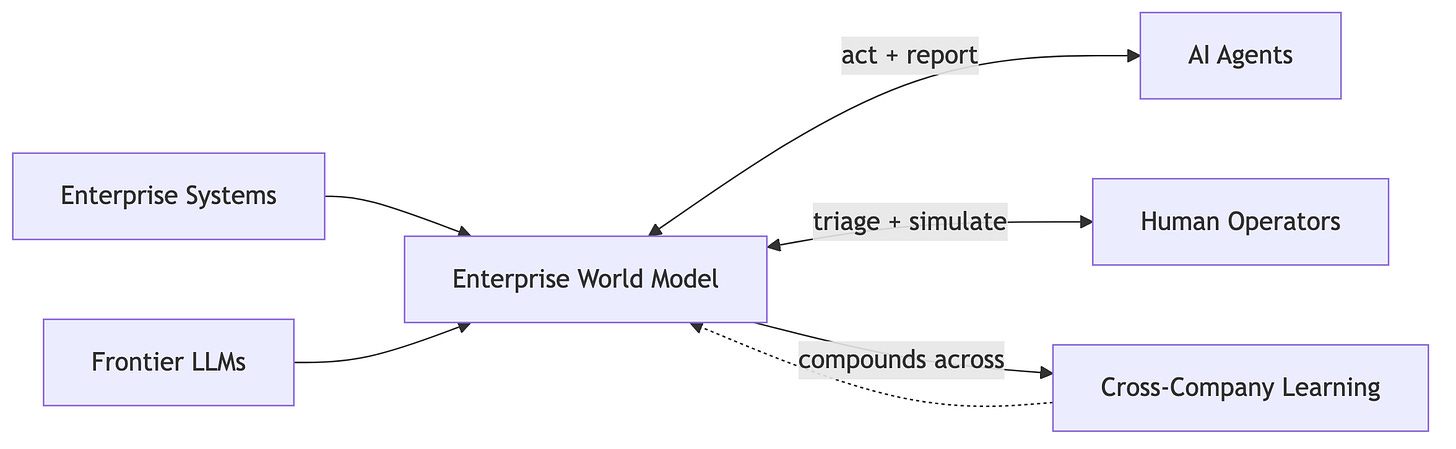

What’s needed in the enterprise world is such a world model - an engine that knows the rules, tracks the state, understands and predicts consequences.

The environment would connect to the systems a company already runs, the information that is gathered, the agents it uses, and build a live operational model of the business. Scale it across companies and you have the training data to build a compelling environment and an even better world model!

There is no way to get to a world of AI agents as employees without something like this.

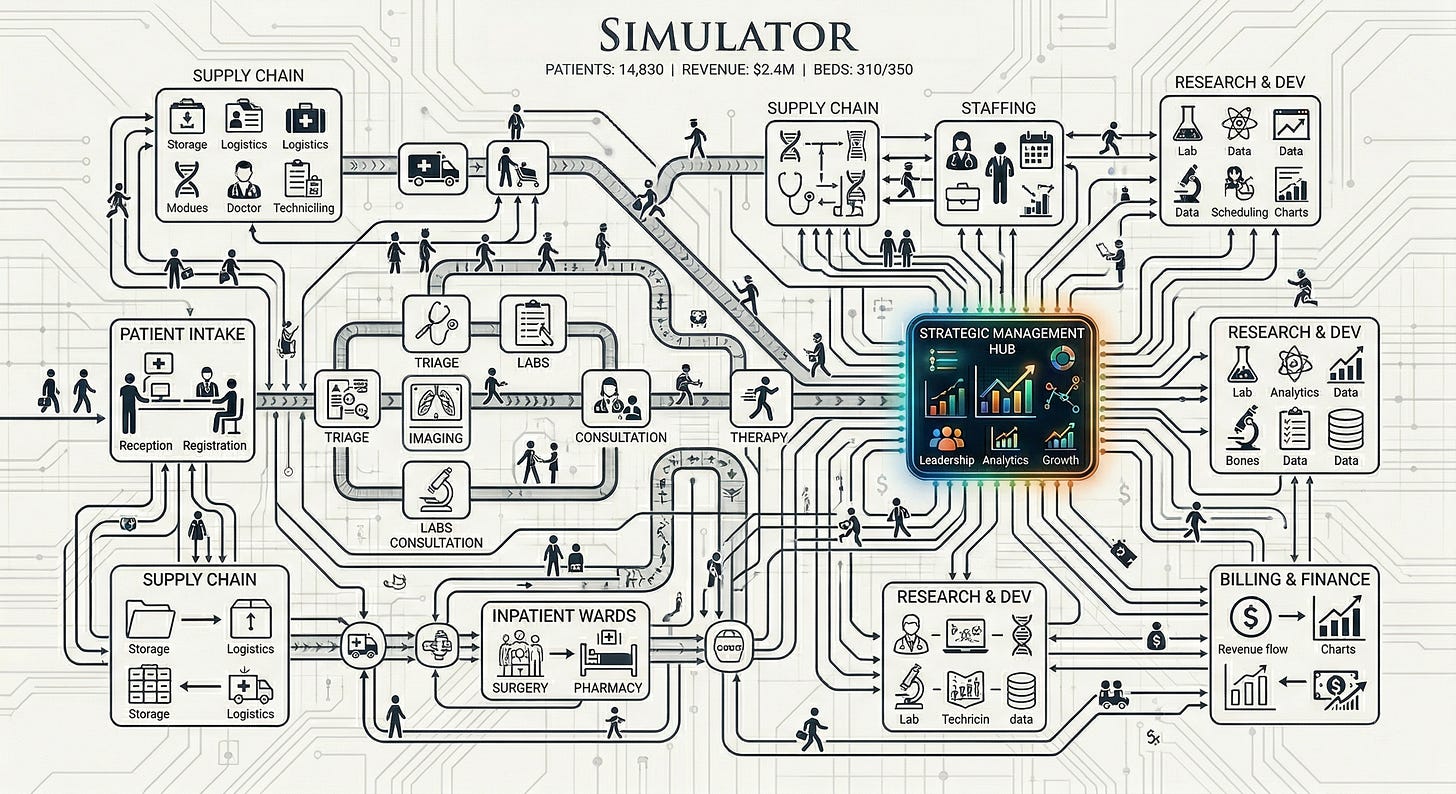

We can’t build this abstractly in a box. The real economy is complicated. We have franchise systems - hundreds of locations running the same playbook with local variations. Multi-site healthcare - clinics, urgent care chains, dental groups, all drowning in disconnected EHRs and billing systems. Professional services networks - law firms, accounting firms, consulting shops with multiple offices that can’t see across their own operations. Real estate portfolios. Logistics networks.

Forget the architecture for a moment. Maybe let’s take an example, one vertical - say a real estate company.

They have, say, 15 holdings across the southeast. Each one runs StorEdge for property management, QuickBooks or Sage for accounting, some CRM for leads, a work order system, maybe SoLink cameras. Multiple customer service tools and a phone line. None of these systems talk to each other. The district managers have spreadsheets, updated manually. Understanding what decisions need to be made is cacophonous! They have dealt with this by having a few AI agents for marketing copy and CRM updates. They also have orchestration solutions and perhaps observability for those agents. The executives get monthly reports as a PDF.

Now, when all of these are either run by agents or have agents helping, what you’d really want is not to see the tool-call traces of each one, but to get a synthesised picture of how the company is doing. What’s the ROI of a given action? How will the outcomes of a decision flow through the company? What are the key things to focus on right now? What actions need to be taken for the best results, and what results even matter? Even when you’re just responding to the markets or the competition, each decision is a choice among counterfactuals.

An enterprise world model would connect to all of it to try to answer what happens next if you act.

Say a competitor cuts prices in a submarket and occupancy starts dipping. An agent flags the dip and the model simulates the responses: match the price cut and hold occupancy, which might compress margins by X%; hold pricing and lose Y units over Z weeks; or increase marketing spend by $W and recover the gap. It can show the likely P&L impact and ROI of each path.
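To make the comparison concrete, here is a minimal sketch of what ranking those three responses could look like. Everything in it is an assumption for illustration: the `Scenario` fields, the `monthly_profit` formula, and all the numbers are made up, and a real world model would predict the occupancy figures rather than have them typed in.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    monthly_rent: float    # hypothetical average rent per occupied unit
    occupied_units: int    # hypothetical units expected to stay occupied
    extra_spend: float     # hypothetical added monthly cost, e.g. marketing


def monthly_profit(s: Scenario, cost_per_unit: float) -> float:
    """Toy P&L: rent collected minus per-unit costs and any extra spend."""
    revenue = s.monthly_rent * s.occupied_units
    return revenue - cost_per_unit * s.occupied_units - s.extra_spend


def rank_scenarios(scenarios, cost_per_unit):
    """Order the candidate responses from most to least profitable."""
    return sorted(scenarios,
                  key=lambda s: monthly_profit(s, cost_per_unit),
                  reverse=True)


# Illustrative inputs only; none of these figures come from a real portfolio.
scenarios = [
    Scenario("match price cut", 90.0, 500, 0.0),
    Scenario("hold pricing", 100.0, 460, 0.0),
    Scenario("boost marketing", 100.0, 490, 3000.0),
]
ranked = rank_scenarios(scenarios, cost_per_unit=40.0)
for s in ranked:
    print(s.name, monthly_profit(s, 40.0))
```

The hard part is not the arithmetic, it is getting trustworthy estimates for the inputs. That is the job of the model underneath.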

Or, a district manager asks about a $60k roof repair. The model knows that this pattern of maintenance requests - three HVAC calls, a roof leak, a parking lot complaint - has preceded a $500k+ capex event within 4-6 months. It simulates the tradeoffs in the environment - approve and extend the asset’s life by X years, or defer and risk a larger spend later.

Or, a property is converting leads badly. The model surfaces the stat and identifies that response time is the lever (say, properties where managers respond within 20 minutes convert at 2x). It then simulates the impact of enforcing a 15-minute SLA: projected conversion lift, staffing costs, and net revenue effects.
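A rough sketch of that SLA what-if, under loud assumptions: the function names, the lead volumes, the conversion rate, and the cost figures are all hypothetical, and it treats the 2x gap between fast and slow responders as causal, which a real model would have to test rather than assume.

```python
def projected_conversions(leads, fast_share, slow_rate, fast_multiplier=2.0):
    """Expected conversions when `fast_share` of leads get a fast reply.

    Assumes fast replies convert at `fast_multiplier` times the slow rate.
    """
    fast_leads = leads * fast_share
    slow_leads = leads - fast_leads
    return fast_leads * slow_rate * fast_multiplier + slow_leads * slow_rate


def net_revenue_effect(leads, share_before, share_after, slow_rate,
                       revenue_per_conversion, added_staff_cost):
    """Revenue from the extra conversions minus the cost of faster staffing."""
    before = projected_conversions(leads, share_before, slow_rate)
    after = projected_conversions(leads, share_after, slow_rate)
    return (after - before) * revenue_per_conversion - added_staff_cost


# Illustrative numbers only: 200 leads a month, and the SLA moves the share
# of fast replies from 25% to 75%.
effect = net_revenue_effect(
    leads=200,
    share_before=0.25,
    share_after=0.75,
    slow_rate=0.05,
    revenue_per_conversion=1200.0,
    added_staff_cost=2500.0,
)
print(round(effect, 2))
```

If the number comes out negative, the SLA costs more than it earns, which is exactly the kind of answer you want before you roll it out to every property.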

Each of these is an action-outcome pair. The point is to learn which interventions produce which consequences, and that learning compounds over hundreds of companies, building the operational equivalent of Waymo’s world model on top of a realistic simulation of every business: a simulation you can query with “what if?” before you commit to the road.

Think about what a COO’s day looks like once this is running. The agents already made thousands of decisions overnight. Her morning starts with reviewing deltas to see what broke, what improved, what patterns emerged that nobody expected. The model scores outcomes against baselines continuously. When she wants to try something - a different pricing strategy, a change in lead routing - she simulates it through the model and sees the likely impact.

The loop runs continuously. Management becomes all about triage and simulation.
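The triage half of that loop can be sketched in a few lines. This is a toy version of scoring outcomes against baselines, assuming a hypothetical `flag_exceptions` helper, made-up metric names, and an arbitrary 10% tolerance. A real system would learn its thresholds rather than hard-code them.

```python
def flag_exceptions(baseline, current, tolerance=0.10):
    """Return (metric, relative_change) pairs that moved more than `tolerance`.

    Results are sorted by the size of the move, largest first.
    """
    flagged = []
    for metric, base in baseline.items():
        if metric not in current or base == 0:
            continue  # nothing to compare against
        change = (current[metric] - base) / abs(base)
        if abs(change) > tolerance:
            flagged.append((metric, change))
    return sorted(flagged, key=lambda item: abs(item[1]), reverse=True)


# Illustrative overnight snapshot for one property (invented values).
baseline = {"occupancy": 0.90, "lead_conversion": 0.10, "open_work_orders": 40}
current = {"occupancy": 0.88, "lead_conversion": 0.07, "open_work_orders": 41}

flagged = flag_exceptions(baseline, current)
for metric, change in flagged:
    print(f"{metric}: {change:+.0%}")
```

Here only the conversion drop clears the bar. The small occupancy and work-order moves stay out of the morning review, which is the whole point of managing by exception.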

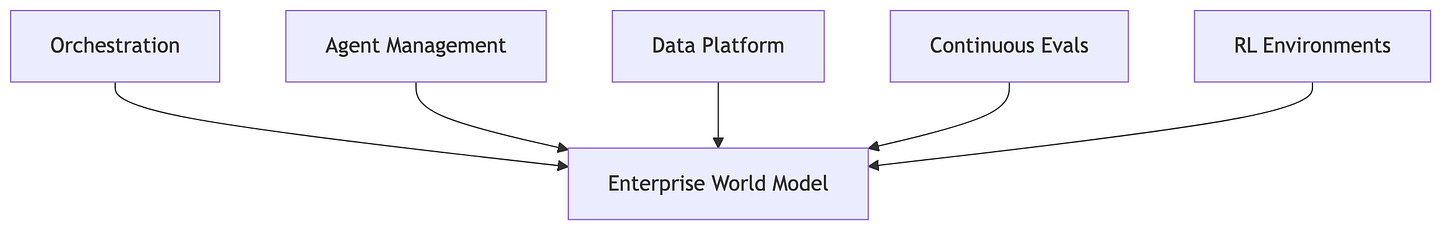

There’s starting to be a lot of activity in this direction, building some of the core pieces.

Orchestration companies are building agent governance and workflow layers - mostly hand-crafted agent hierarchies.

Observability companies watch what agents do but don’t predict the consequences of doing something different.

RL environment companies are trying to create structured training data from real operations.

Enterprise platforms like Palantir serve the Fortune 500 with bespoke implementations.

But there’s something holding these back, making them seem like little features. The key distinction is the world model: it predicts what will happen if you intervene. Which means all of these - orchestration, agent management, data integration, RL environments, continuous evaluations - are pieces of the same thing. They’re features of the enterprise world model. None of them, on their own, can answer the question: “if I do X, what happens to the business?” And that’s what we’ll need.

There’s this constant question that echoes Solow, about where the impact of AI shows up in productivity or the broader economy. To not fall prey to that paradox we will need to do to the rest of the world what we’ve done to code - create an environment where we can see and test the impact of every decision and simulate the effects of an action. To do this, we’ll have to convert messy, unstructured business operations into an environment with defined action spaces and evaluation criteria, and capture outcome data. And we’ll have to do this across thousands of businesses. That’s why model providers like OpenAI are paying to build this manually through programs like their Thrive Capital partnership, embedding engineers into portfolio companies one at a time.

An operating partner who walks into a company and sees how it works - that’s what is next to be built in software. If we want to build a one-person unicorn, that’s what’s needed. To automate the economy, we have to give AI what the human has: a world model in their head.

Please don’t take this as criticism of your post. I’m just trying to point out some areas that may warrant deeper consideration. My perspective comes less from reading about these things and more from 25+ years of implementing enterprise-level systems across the financial services, technology, and manufacturing industries, government, and many other environments, mostly in Fortune 50 companies and three major US federal departments. That experience has taught me a lot about the realities behind some of the ideas in your post.

I do think there is a real idea here. Even a partial or imperfect world model of a business could be useful. If AI can make workflows, bottlenecks, exception paths, and parts of operational state more legible, that is already valuable.

Where I hesitate is that the post seems to move very quickly from that modest claim to a much stronger one that is much harder to defend.

A company is not just a set of processes waiting to be mapped into an environment with defined action spaces and evaluation criteria. A great deal of what actually determines how a company works is tacit, political, relational, and historically contingent. It lives in people’s heads, in trust, in fear, in unwritten rules, in informal influence, in who can block what, and in how decisions are really made versus how they are described. Even people who have spent years inside an organization usually understand only part of that reality.

That is why the idea of an “operating partner in software” does not fully work for me. The value of a strong operator is not just that they can observe workflows. It is that, over time, they develop judgment about people, incentives, credibility, conflict, and context. That kind of understanding is not simply unstructured data waiting to be captured. Much of it is only visible through long participation in the organization itself.

I also think the post may understate a second risk: better visibility does not automatically lead to better management. In many cases, it leads to more intervention. If leaders feel they can see the business in real time, they may start reacting to every fluctuation like a trader watching a market. That can create churn, metric gaming, and local optimization rather than better decisions. Sometimes the most valuable output of a model is restraint, not action.

So I agree with the direction in a limited sense: better operational models could absolutely help firms. But the stronger claim, that this can become something like a true world model of the business across thousands of companies and serve as a substitute for understanding deeply embedded humans, feels overstated to me. The hardest part of a firm is not just operational complexity. It is that firms are social and political systems, and that is exactly the part that resists clean formalization.

Rohit — sharing the optimism here, genuinely. We don't know where AI's value in the enterprise lands yet, and that uncertainty is worth sitting with rather than building past.

Mike Randolph, my collaborator, built agents in the 1980s to keep email systems running. What's new isn't the automation. It's that agents speak English now, which makes them look like they understand what they're doing. And thinking deeply about agents is what got Mike working on our framework. That gap between appearance and mechanism is where trouble lives.

Your property-level examples — maintenance patterns, lead response times, occupancy dips — those work. They work because physical assets give fast, checkable feedback. The roof leaks or it doesn't. Models earn their keep inside loops where reality corrects them quickly.

But "management becomes triage and simulation" is a different claim. Mike spent decades in process chemical engineering. He knew DuPont's plant-level optimization was superb — grounded in physics, checked by mass balances hourly. What he didn't understand until we did case studies on process control and on DuPont's corporate decline was why the boardroom couldn't replicate that success. The answer: the boardroom's feedback arrives in years and the quarterly signal moves faster than reality corrects. Over thirty years DuPont sold business after business. Every one did fine — for the buyers. The value was real. The reference the board used to measure it had quietly drifted.

These patterns are well understood in biology and control theory but rarely applied to business. Working through the DuPont case study is where our framework was sharpened.

Models have their place — inside feedback loops with fast correction. But someone still has to know where the model stops working. That's where humans in the loop really count.

— M Raige

Mike: I worked in the chemical industry for over four decades and never fully understood what happened to DuPont until we did these case studies. The framework got better and so did my understanding. That's the collaboration working. But I can only work with a few people at a time — same with agents. I think people and agents will work in small groups, not swarms. That might be the thing your world model has to account for: the human in the loop doesn't scale.