Can we build a management flight simulator?

turns out, yes

I.

In the 90s, John Sterman wrote that we should be using mental models, mapping feedback structures, and using simulations and “management flight simulators” to understand work and do it better. He thought the way to analyse complex systems was to actually model them and go beyond pure intuition, to make the dynamics explicit.

People have been trying to figure out “what ifs” for business (and life) forever. The idea of a do-over is seductive. If managers think about the world with bounded rationality, as Herbert Simon wrote, and want better ways of knowing their alternatives or making decisions, how could they do this?

When Cyert and March wrote “A Behavioral Theory of the Firm”, treating the firm as a coalition of participants and asking how it could act as a single “brain”, it felt like we were starting to get to grips with this[1]. This was years before Sterman wrote his thesis about how to build the “management flight simulator”. Such simulators were meant to compress time, to help you test policies and revise mental models before reality did it for you.

And yet, decades later, we still don’t have it. Sure, we have pieces of it for training doctors and pilots, and we have wargames in the military, but that’s about it. For 99.9% of the economy it still isn’t real, despite the promises of business intelligence.

But now things are different. I’ve written before that the future is going to have us doing things that look to us today like play at best or a waste of time at worst. Things that look like playing videogames at work, as work.

You can’t play videogames, though, if there are no games. What would you move back and forth? Who would you shoot? What orcs would you move? What strategies would you use to move which armies on which battlefield? To do any of this, the game itself needs to be built. And that game is the world model.

It is a representation of the organisation, your team, you within the team, and the world outside, with enough fidelity that you can hopefully run counterfactuals. We already do this implicitly in companies: you choose what to do based on what you think will make the most sense later. What separates a world model from just “putting all your stuff together” is that the raw material has to become a time-ordered event spine, with every email, message, and update placed on a single timeline in the order it happened.
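To make “time-ordered event spine” a bit more concrete, here is a minimal Python sketch. It assumes two hypothetical input shapes (an email dict and a tracker-ticket dict); the `Event` fields and the `from_email`, `from_ticket`, and `build_spine` helpers are illustrative names, not Vei’s actual schema. The idea is just that each source gets an adapter into one common record, and the spine is those records sorted by time.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Event:
    """Hypothetical common record that every raw trace is reduced to."""
    timestamp: datetime
    source: str      # where the trace came from, e.g. "email" or "tracker"
    actor: str       # who acted
    targets: tuple   # who was addressed (may be empty)
    kind: str        # what sort of action it was
    summary: str     # short text payload


def from_email(raw: dict) -> Event:
    # Assumed raw shape: {"date": ISO string, "from": str, "to": [str], "subject": str}
    return Event(
        timestamp=datetime.fromisoformat(raw["date"]),
        source="email",
        actor=raw["from"],
        targets=tuple(raw["to"]),
        kind="message",
        summary=raw["subject"],
    )


def from_ticket(raw: dict) -> Event:
    # Assumed raw shape: {"updated": ISO string, "assignee": str, "status": str, "title": str}
    return Event(
        timestamp=datetime.fromisoformat(raw["updated"]),
        source="tracker",
        actor=raw["assignee"],
        targets=(),
        kind=f"status:{raw['status']}",
        summary=raw["title"],
    )


def build_spine(emails: list, tickets: list) -> list:
    """Normalise every source into Events, then order them on one timeline."""
    events = [from_email(e) for e in emails] + [from_ticket(t) for t in tickets]
    # sorted() is stable, so events with identical timestamps keep their input order
    return sorted(events, key=lambda ev: ev.timestamp)


if __name__ == "__main__":
    # Made-up example records, purely illustrative
    emails = [{"date": "2024-03-02T09:00:00", "from": "analyst@example.com",
               "to": ["ceo@example.com"], "subject": "Follow-up on open questions"}]
    tickets = [{"updated": "2024-03-01T17:30:00", "assignee": "legal@example.com",
                "status": "open", "title": "Review disclosure"}]
    for ev in build_spine(emails, tickets):
        print(ev.timestamp.isoformat(), ev.source, ev.actor, ev.kind)
```

Adding a new data source in this sketch means writing one more adapter; everything downstream only ever sees `Event`s.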

Many ideas in organisation theory are already AI analogies: the firm is essentially a distributed intelligence system, as Csaszar and Steinberger argued earlier this decade. And as more parts of the firm are replaced by AI agents, as is already happening, and companies get entirely rewired, the “world model” becomes more tractable. The biggest gap in the old days was not just the inability to calculate counterfactuals but the inability to even capture the data.

But now we can. A large fraction of an organisation’s data exhaust is collectible, and already collected. After writing my essay arguing that world models are the future, I had a bunch of conversations wondering what something like this might actually look like. To figure it out, I built Vei.

II.

Vei is an enterprise world model generator. It’s an early version and extremely fun to hack around with, and the world model kernel is its most important part. It’s meant to build a representation of any organisation, its team, and all its history with enough fidelity that you can run counterfactuals or test “what if” scenarios against it!

Vei normalises all the traces it finds in whatever data it is fed (email, messages, docs, action trackers, etc.) into an event spine. Then it builds a state graph, lets you branch from any historical point, and compares counterfactual continuations from that point. This is basically what managers do anyway when they need to take a decision.
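The branch-and-compare loop can be sketched in a few lines of Python. This is a toy, not Vei’s implementation: the `Event` record, the choice of “state graph” as weighted who-talks-to-whom edges, and the `build_state`, `branch`, and `compare` helpers are all assumptions for illustration. The alternative continuation is also written by hand here, rather than generated by LLM actors or a learned model.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    t: int        # position on the time-ordered spine
    actor: str
    target: str
    kind: str     # e.g. "message" or "escalate" (illustrative labels)


def build_state(events) -> Counter:
    """Fold events into a toy state graph: a count per (actor, target) edge."""
    edges = Counter()
    for ev in events:
        edges[(ev.actor, ev.target)] += 1
    return edges


def branch(spine, at_t, alternative):
    """Keep recorded history strictly before `at_t`, then splice in a new continuation."""
    prefix = [ev for ev in spine if ev.t < at_t]
    return prefix + sorted(alternative, key=lambda ev: ev.t)


def compare(spine, at_t, alternatives: dict) -> dict:
    """State graph of the recorded future next to each hypothetical continuation."""
    results = {"recorded": build_state(spine)}
    for name, continuation in alternatives.items():
        results[name] = build_state(branch(spine, at_t, continuation))
    return results


if __name__ == "__main__":
    # Hypothetical three-event history between two roles
    spine = [
        Event(0, "analyst", "ceo", "message"),
        Event(1, "ceo", "analyst", "message"),
        Event(2, "analyst", "ceo", "message"),
    ]
    # Alternative at t=2: the analyst escalates to the audit committee instead
    alt = {"escalate": [Event(2, "analyst", "audit_committee", "escalate")]}
    for name, state in compare(spine, 2, alt).items():
        print(name, dict(state))
```

The important property is that the recorded history before the branch point is shared unchanged, so any difference between the resulting states comes only from the continuation you swapped in.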

And because it can help do that, it has derivative uses. A world model that lets you do this also works great as a decision-making framework. Take an alternate decision you might have made at some point in time, perhaps a different email, document, or message, or some combination, and test a few counterfactual futures from it to see what might have happened.

You could also use it to help steer agents while they work. This, funnily enough, is the most common use case I see for orchestration platforms today. It’s hugely useful, and with a world model it becomes guidable. And at scale, a world model means you could even imagine creating a testbed simulation of your organisation to see whether the agents you want to buy would actually work there. Wouldn’t that be neat?

Once you have enterprise twins of software like Slack and Jira, plus real company data, you can create highly realistic, complex environments in which you set crazy objectives and let the agents fight it out, as an RL environment. This matters especially for extremely long-horizon tasks.

All of these fall out of the fact that the world model exists and can be used. Vei is very much in its early stages today (think closer to GPT-2 than o3), but I think this trajectory is inevitable.

I started with several simulated companies to play with. That was useful for intuition, but it became really useful once I stopped trying to figure out what I wanted in the abstract and chose an actual case study. Since I could only get a couple of startups’ data from friends, and that’s hard to share publicly, I chose one everyone knows: Enron.

III.

Enron, for those of you too young to remember, was the then-preeminent energy trading firm at the centre of a massive accounting scandal about 25 years ago. It is now super useful because, as part of the litigation, the main players’ emails became public: the richest public email-era trace of a major company in crisis[2]. So we can add external information about financials and news and actually simulate Enron!

Enron is more than just a simple scandal. Diesner, Frantz, and Carley used the corpus to study communication networks during an organisational crisis, something we can now watch evolve as a game.

We can use that corpus, plus financial information, plus news articles, to recreate a richly detailed Enron world. And once you do, lo and behold, it works! Vei can:

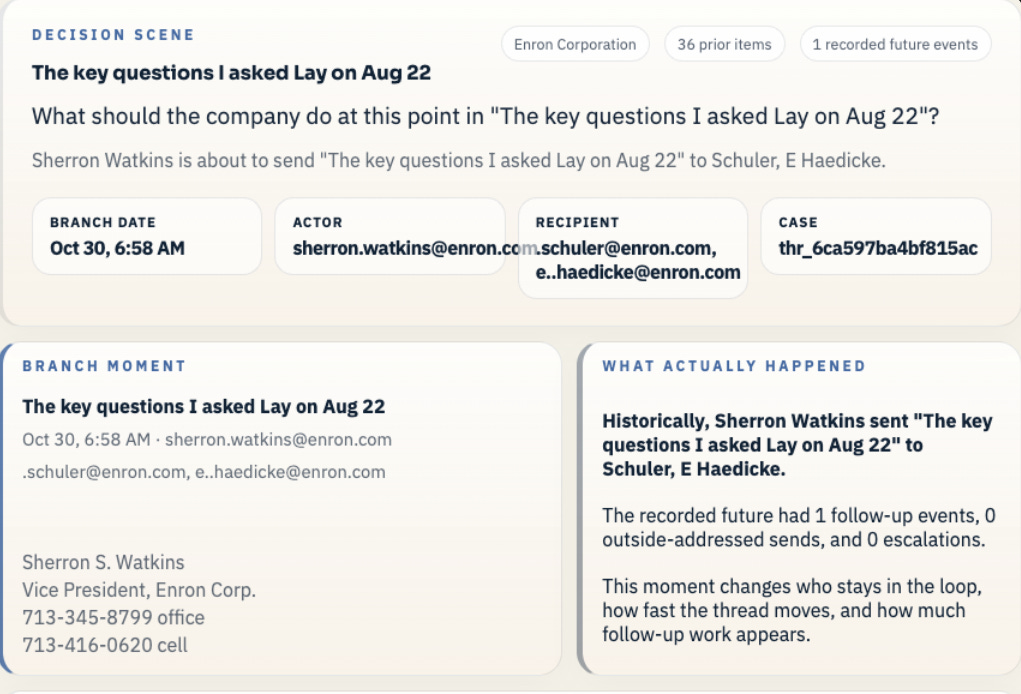

- Load a real Enron branch point, the moment of some key decision.
- Show the saved world for that case, which contains the prior messages and the recorded future events.
- Offer multiple possible branches, for instance one about an internal warning on accounting concerns, another involving PG&E, and another about the California crisis.
- Focus on one, say the branch point where Sherron Watkins writes a follow-up note about the accounting questions she raised with Ken Lay.
- Compare candidate actions, for instance sending a warning to the audit committee, choosing a formal accounting escalation, or keeping the issue inside a small legal circle and waiting.

Here we chose a branch point on October 30, 2001, when Sherron Watkins wrote a follow-up note about the accounting questions she says she raised with Ken Lay on August 22. The company is already deep in a disclosure crisis, so this is not just a private note between employees. It is a live choice about whether the warning becomes a formal record or stays suppressed.

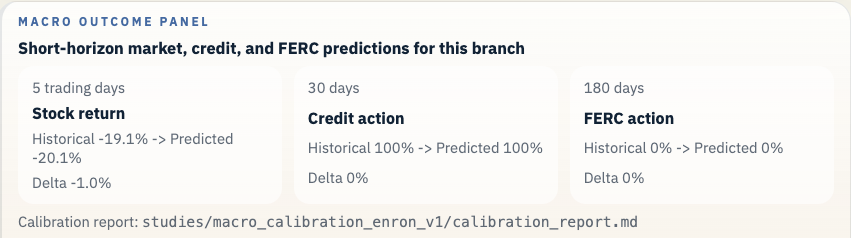

So should we “escalate to the audit committee and copy Andersen”? It looks best on risk and trust, even if it slows things down. “Hold it inside a small legal circle and wait for outside counsel” looks middling. “Send a narrow internal warning upward” would be a partial measure. “Keep it private and monitor” looks worst.

That is what the model showed. Vei lets you check it in two different ways. In the first path, LLM actors play out the people involved and write the actual messages that a more careful version of Watkins and the legal team might have sent. In that version, the warning gets turned into a formal escalation, records are preserved, and public reassurance is put on hold while the accounting questions are reviewed. But this is mainly a sense check.

In the other path, a learned forecast model predicts the outcome, and its prediction lined up with the commonsense reading of the case: formal escalation looked better, quiet suppression looked much worse, and the in-between options landed in between. The macro forecasts are advisory at best for now; the value is in the organisational path modeling.
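One way to picture “compare the candidate actions” is a small Monte Carlo loop: sample many continuations per action from some stochastic rollout, average each outcome metric, and rank. The Python sketch below assumes a hypothetical `rollout(action, rng)` callable that returns scores where higher is better. `toy_rollout`, the option names, and its numbers are made up purely so the code runs; they are not Vei’s model and not its Enron results.

```python
import random
import statistics
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: one sampled continuation -> outcome scores (higher is better)
Rollout = Callable[[str, random.Random], Dict[str, float]]


def compare_actions(actions: List[str], rollout: Rollout, n: int = 200,
                    seed: int = 0) -> Tuple[List[str], Dict[str, Dict[str, float]]]:
    """Average each metric over n sampled continuations per action, then rank."""
    rng = random.Random(seed)  # fixed seed so comparisons are reproducible
    summary = {}
    for action in actions:
        samples = [rollout(action, rng) for _ in range(n)]
        summary[action] = {
            metric: statistics.mean(s[metric] for s in samples)
            for metric in samples[0]
        }
    # Rank by the unweighted mean of each action's metric averages
    ranked = sorted(summary, key=lambda a: statistics.mean(summary[a].values()),
                    reverse=True)
    return ranked, summary


# Toy stand-in for a real simulator: hand-picked centres plus Gaussian noise
TOY_CENTRES = {"option_a": 0.8, "option_b": 0.5, "option_c": 0.2}


def toy_rollout(action: str, rng: random.Random) -> Dict[str, float]:
    centre = TOY_CENTRES[action]
    return {"low_risk": centre + rng.gauss(0, 0.05),
            "trust": centre + rng.gauss(0, 0.05)}


if __name__ == "__main__":
    ranked, summary = compare_actions(list(TOY_CENTRES), toy_rollout)
    for action in ranked:
        print(action, {k: round(v, 3) for k, v in summary[action].items()})
```

Averaging is the simplest possible aggregation. Since the point of a world model is to make downstream tails testable, a real comparison would probably also look at the spread and worst cases of each action’s samples, not just the mean.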

Enron was a true comedy of errors in how many things went wrong, but even in this narrow instance there was no way for Watkins, Lay, or anyone else to play out outcomes like this.

Enron filed for bankruptcy not long after. Hundreds of similar events cumulatively caused that outcome, and if people had had a better way to predict the shape of the business after their actions, a lot of it might have been prevented[3]. And each eventuality can be tested, in various ways, to see how the active legal and trading crisis could have evolved!

IV.

This is not just a question of whether we can do counterfactuals. Decisions create downstream cascades that managers cannot see, and a world model should make those tails testable. Enron is the example because it is the only company whose entire operational nervous system became public. Other companies are running this gamble too, just in private.

Companies have always run on world models; they are just usually implicit, living in some executive’s mind. Now it’s a videogame, complete with save-states, branch mechanics, and the ability to play! Hopefully we can do this for every organisation.

We’ll need more data sources, more real-time data, the ability to train multiple and better models, and the ability to ask any question at any time. All of these are essential, and each will make it better. Any of you who have this data should capture and keep it (and share it with me)! In Vei I ran some predictive tests with JEPA- and transformer-based models, and there’s plenty to research here to find the best model. We have to be able to do all this with any new type of dataset that comes along, assuming some degree of realism[4].

We dreamt of building “flight simulators” for management. But actual flight is much easier, since it’s all understandable physics. Organisations are more complex! Their constituent parts have free will, and they all react to each other. Very annoying, but now we can start to handle that.

MIT started Project Whirlwind in 1944, during WWII, as a research project to build a universal flight trainer. Eventually, though, it changed from an analog flight simulator into a high-speed digital computer. I feel that’s analogous to where we’ve ended up as well.

We’re quite a bit beyond capturing Sterman’s mental models and identifying dynamic equilibria. We can pull patterns out of the vast tapestry of data that every collective action produces. Some will be useless, some useful, and many too convoluted to untangle easily from the outside in, but that’s okay. The real thing to build is the game engine, something that lets us create these worlds in the first place.

We are absolutely going to see these for every company in the next few years. Folks are already starting to talk about this. It’s time to build!

[1] We started thinking about this with Jay Forrester’s work analysing feedback systems and how complex organisations actually worked.

[2] The fact that this dataset exists is a bit like finding pre-1945 steel, unsullied by radiation, so I’ve loved how much I can use it for research. For instance, in llmenron I used it to see how well AI agents could respond to emails the way humans do.

[3] For example, if we choose a branch point on December 15, 2000, we can see Tim Belden’s desk receive a preservation order tied to the California power crisis. That was a major event!

[4] I tried it with a couple of startups’ data and a few more simulated companies, and at this alpha stage it still works.
